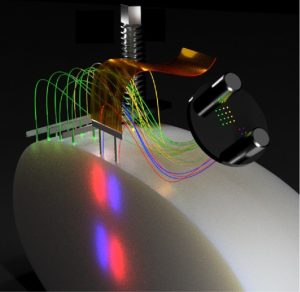

Schematic illustration of a possible fiber and electrode arrangement. The thin fibers allow both spatial flexibility and high-quality extracellular recordings. Courtesy of Ilka Diester.

Prof. Dr. Ilka Diester

At the Federation of European Neuroscience Societies Forum (FENS; 9th–13th July 2022) held in Paris (France), we chatted with Diester about the different techniques she uses in her research, including one-photon imaging, and talked about what virtual reality (VR) does to our brains.

In a talk, I presented optimized probes for in vivo optogenetics and electrophysiology, along with new techniques that use multiple fibers to control different brain sites. These fibers can be implanted separately from a laminar electrophysiological probe. They are super thin and destroy less tissue. They therefore allow ultrafast optogenetic silencing because they can be implanted very close to intact cell bodies [1].

In the talk, I also presented an optoelectrofluidic probe, a multifunctional probe combining the injection system with the recording system and the stimulation system, which then leads to very precise expression profiles of the opsin and simplifies surgeries [2].

Lastly, I also presented a technique for training students with 3D printed skulls and a fake brain [3] – I actually received the most questions about that aspect of the talk, and students were really excited about this technique.

We also had one poster about a technique recently published in Neuron called FreiPose, which is a tool for 3D, marker-free tracking of animals [4]. We had another poster about an open-source toolbox for one-photon imaging and behavioral flexibility tasks.

Optogenetics is one of our major tools. We use it either to control local activity by inhibiting or exciting it or, increasingly, to control pathway-specific activity using a Cre-Lox system. In this system, a Cre-carrying virus travels retrogradely and a DIO construct is used, so that expression of the opsin is under the control of Cre.

We also use electrophysiology, where horizontal arrays of multiple electrodes are implanted across the cortex or deeper brain structures, as well as Neuropixels probes and laminar probes.

We have quite a few studies in mice using one-photon imaging with miniscopes, as well as two-photon imaging in slices or in vivo. For the in vivo part, we are particularly excited about the holography system, which allows us to write holographic patterns (basically, physiologically meaningful patterns) into the brain.

We often work with standard tools, and then we realize that something is not optimal. Take imprecise expression profiles, for example: if you just use the standard injection systems, you sometimes get a backflow of the viral vector when you retract the needle.

Another challenge is that whenever you introduce something to the brain, it causes damage, particularly when you have to go to deeper structures in the brain or remove the dura (the thick outer membrane of the brain) or find your way around blood vessels. Thin fibers are helpful for this.

Finally, optoelectronic artefacts are a challenge, as they can distort signals. New materials sometimes help; for example, graphene seems to be one of the solutions at the moment.

As I am a neuroscientist, not a neuroengineer, whenever I develop probes I talk to my colleagues from the Engineering Department, who, conveniently, sit in the same building as us. In the Intelligent Machine Brain Interfacing Technology (IMBIT) building, we have neurobiologists, neuroengineers, computer scientists and AI specialists. I also have some really talented students in the lab who are driven to develop these tools further. We work together with engineers to come up with good solutions to these problems. Most of the papers I have recently published are in collaboration with engineers because it is super fruitful.

There are three different topics in BrainLinks-Brain Tools, which is closely linked to IMBIT. I am the spokesperson for NeuroCore, which is about basic neuroscience. There is also NeuroProbes, which is about probe development including electrophysiology probes, optogenetic probes, electrocorticography arrays and so on. Finally, we have an area called NeuroRobotics, which is in the field of AI and closely links clinics and brain–machine interfacing technology. In each of these research areas, we have multiple projects and have some large equipment that we use.

In my area, NeuroCore, we have a research unit that we call the OptoRoboRat. This is a combination of a two-photon microscope with a holographic unit with which we can write in patterns. This is combined with VR, so mice run on a wheel and think they are moving. In parallel, we have a setup with a freely moving mouse experiencing the same thing. We have the option to transfer the mouse between the two setups, so we can compare the neural activity in VR and in real reality.

This is a fascinating question for me: what does VR do to our brain or to the mouse brain? Some evidence has shown that VR causes reduced neuronal activity compared to real reality. I think this is a very relevant question for society right now because we are immersing ourselves more and more in VR, but what does it do to us? This is underexplored.

This is linked to the robotics group in BrainLinks-Brain Tools. We have a little four-legged robot, which looks like a rat or a dog, and the idea is that this robot collects information about the environment and feeds it back into the mouse on the scope, while the neural activity of the mouse controls the robot. It is a closed-loop system.

We also have the Soft-FIB, which is for analyzing the tissue–probe interaction. With this device, we can cut through brain tissue that contains an implanted electrode, without removing the electrode, and then we can look at the intersection. This is important because we know that there is glial scarring and some immune-like response in the brain, even though the brain does not really have an immune response in the classical sense, because astrocytes are present.

Finally, there is the machine–brain interface focus, where we have a very advanced recording technique in humans that is linked to a robot arm. Clinicians and AI researchers are developing some new ideas and tools at this intersection.

At the moment, my lab has two streams: a cognitive stream and a motor control stream. My goal is to bring these two sides together because, of course, they are linked. Whenever we have a cognitive decision, or behavioral flexibility that then leads to a cognitive decision, this has to be translated into a motor output. But how is the information transferred? This sounds super simple, but the answer is not clear, and this is the future direction of my lab.

At the moment, I am most excited to take two-photon imaging into freely moving animals, which has recently been performed and published. I think the next step, maybe even sooner than 5 years, is to also do holographic stimulation in freely moving animals. Neuroengineers have come up with some intelligent solutions for how to do that. This would be super powerful because then you can do whatever you do at the moment in a head-fixed mouse, but in a freely moving mouse. Everybody who does behavior analysis or training knows that it is easier to train a mouse in a freely moving condition. They just perform better. Furthermore, more naturalistic tasks are possible under freely moving conditions.

To the press release of BioTechniques